The Greatest Health Revolution

Charting the Real Reasons Infectious Diseases Faded into History

January 6, 2026

“The history of vaccination from its beginning to its present position is a refreshing illustration of the truth that medical science is human first and scientific afterwards… The refusal of the professional leaders to go back upon their mistake, when it was abundantly proved to be a mistake, has become an inherited obligation of hard swearing to successive generations. Things have now come to such a pass that anyone who undertakes to answer for Jenner and his theories, must shatter his own reputation for scientific and historical knowledge. Most of those who have a reputation to lose decline the challenge.” (1890)

— Dr. Charles Creighton, MD, Professor University of Cambridge, author of numerous writings, including History of Epidemics in Britain (vol 1 & 2), Bovine Epidemics in Man, Cowpox and Vaccinal Syphilis, Jenner and Vaccination: A Strange Chapter of Medical History, and Vaccination in the Encyclopædia Britannica in 1888

“More than twenty years ago I began a careful study of the subject of vaccination, and before I got through, I was forced to the conclusion that vaccination was the most colossal medical fallacy that ever cursed the human race. Few physicians attempt to investigate this subject for themselves. They have been taught to believe its efficacy. They have vaccinated because it was the custom and they were paid for it. They have supposed vaccination would prevent small-pox because the best authorities said it would, and they accept it without question.” (1888)

— Dr. A. M. Ross

“The fact that an opinion has been widely held is no evidence whatever that it is not utterly absurd; indeed, in view of the silliness of the majority of mankind, a widespread belief is more likely to be foolish than sensible.” (1929)

— Bertrand Russell, Philosopher

“By the time laboratory medicine came effectively into the picture the job had been carried far toward completion by the humanitarians and social reformers of the nineteenth century… When the tide is receding from the beach it is easy to have the illusion that one can empty the ocean by removing the water with a pail.” (1959)

— René Dubos

The Unquestioned Dogma of Vaccination

Despite recent, highly polarizing events at the U.S. Department of Health and Human Services (HHS), such as the appointment of Robert F. Kennedy Jr. as Secretary, which was celebrated by some and met with consternation by others, vaccination remains the unchallenged, go-to solution, often deployed without even the hint of a critical question. On August 5, 2025, Kennedy announced the termination of 22 mRNA vaccine development projects under the Biomedical Advanced Research and Development Authority (BARDA), totaling $500 million in funding, citing data showing that these vaccines were ineffective in protecting against upper respiratory infections such as COVID-19 and influenza. The funds were redirected to support an alternative vaccine project. Three months earlier, on May 1, 2025, HHS and the National Institutes of Health (NIH) had announced the launch of the “Generation Gold Standard” program, a $500 million initiative to develop a universal vaccine platform. Using a beta-propiolactone (BPL)-inactivated, whole-virus approach, the program seeks to provide broad protection against multiple strains of pandemic-prone viruses, including influenza and coronaviruses. Under the prevailing orthodoxy, the question is never whether vaccination itself is safe, effective, or the correct course of action, only which particular technology to pursue.

The near-religious faith in infectious disease management through vaccines and antibiotics has been deeply embedded in our societal consciousness. Whenever a discussion involves a supposed contagious agent, the only real solution proposed is vaccination, as we saw during the COVID-19 era. In addition, the phrase “safe and effective” has become a near-Pavlovian response whenever the word “vaccine” is invoked. This mantra hearkens back over two centuries to Edward Jenner, who declared, without any rigorous evidence, that his invention of vaccination could be given with “perfect ease and safety” and had the effect of “rendering through life the person so inoculated perfectly secure from the infection of small-pox.”

For decades, there have been endless disputes about the health of vaccinated versus unvaccinated individuals, potential links to neurological damage or autism, the toxicity and safety of vaccine ingredients, long-term immune system effects, and the ethical and regulatory implications of mandatory vaccination, as well as catastrophes such as the Swine Flu Fiasco of 1976 and the Cutter disaster. The fact that Edward Jenner’s statements were demonstrably false virtually from the outset, coupled with these long-standing arguments, makes it all the more remarkable that the word “vaccination” has continued to hold a near-divine status—regardless of what ingredients it contains, who manufactures it, the absence of long-term safety testing, the presence of conflicts of interest, or the mounting evidence of harm.

These disagreements will not end anytime soon, much like other enduring debates in the public sphere—from the best diet, the amount of alcohol (if any) that is safe to drink, and the benefits or harms of coffee to the impact of red meat, the value of supplements, and broader lifestyle questions such as exercise routines, sleep requirements, and screen time.

On May 7, 2025, the NIH–CMS Data Partnership announced its plan to create a real-world data platform that integrates claims, electronic medical records, and consumer wearable data to investigate the root causes of autism spectrum disorder (ASD) and inform long-term strategies.

To believe this system will ever definitively resolve the issue is a form of magical thinking—or perhaps more cynically, a way to placate the public and continue business as usual. Powerful institutional and ideological forces are likely to do everything in their power to delay, obfuscate, or manipulate the findings; even carefully conducted studies are destined to be dismissed, misrepresented, or weaponized to support pre-existing narratives rather than pursue the truth. Given the deeply polarized nature of the debate, any result released after years of work and the investment of countless dollars—if a clear and worthwhile result is ever produced—will be immediately contested, with each side convinced the conclusion was incorrect, flawed, or an out-and-out lie.

But there is a far simpler, cheaper, faster, and more definitive way to assess the impact of infectious diseases and vaccination, a method that cuts through the noise: examining existing mortality data. Preventing deaths, after all, is the main claim to fame of vaccines, and you might imagine this critical data is something policymakers have relied upon for decades. You might also assume that this data is featured prominently on the websites of institutions such as the CDC (Centers for Disease Control and Prevention), the NHS (National Health Service), and the AAP (American Academy of Pediatrics). However, you would likely be sorely disappointed. While the raw data exists, it is rarely presented in a clear, historical context. Few charts that vividly illustrate trends, such as mortality in the pre-vaccine versus post-vaccine era, are easily accessible. Instead, this crucial information is often buried in detailed reports and surveillance databases, fragmented across countless pages, or locked away within academic publications.

United States Mortality Data (1900–1965): A Story Told in Trends

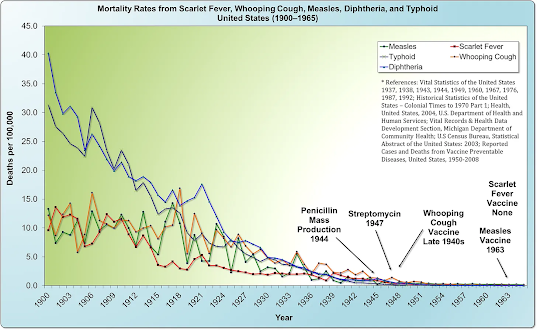

While this essential data is not prominently displayed, it can be accessed through some research. For example, by utilizing historical United States Vital Statistics data, we can construct a mortality chart tracking deaths from diseases like scarlet fever, whooping cough, measles, diphtheria, and typhoid over a significant period: 1900 to 1965.

The story this chart tells is nothing short of revolutionary. What was immediately shocking, and what completely dumbfounded me nearly 30 years ago, was the revelation that the measles death rate had already fallen by a staggering 98% before the introduction of the first vaccine in 1963. Like most people, I had assumed that measles mortality would be high right up until the vaccine’s arrival, after which it would drop precipitously. But that is not what the data revealed; it was the complete opposite of what I, and nearly everyone else who saw this chart for the first time, expected.

Another critical observation is that the mortality rate from whooping cough had already declined by an astonishing 95% by the time its vaccine was introduced in 1948. Once again, this reality contradicted my initial assumption that deaths would have remained high until the vaccine’s arrival. Instead, as with measles, the vast majority of the decline occurred long before widespread vaccination.
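The percentage figures quoted above come down to simple arithmetic: compare the death rate in the year a vaccine was introduced with an earlier baseline rate. The sketch below is one way to do that calculation, assuming the baseline is the highest rate recorded at or before the introduction year. The function name `percent_decline` and the numbers in `placeholder_rates` are hypothetical and chosen only to show the arithmetic; they are not figures from the Vital Statistics series.

```python
def percent_decline(rates_by_year, intro_year):
    """Percent fall from the peak rate at or before intro_year to the rate in intro_year.

    rates_by_year maps a year to a death rate (for example, deaths per 100,000).
    Assumption: the baseline is the peak rate in the period up to intro_year.
    """
    if intro_year not in rates_by_year:
        raise KeyError(f"no rate recorded for {intro_year}")
    # Only years up to and including the introduction year count toward the baseline.
    earlier = [rate for year, rate in rates_by_year.items() if year <= intro_year]
    peak = max(earlier)
    if peak <= 0:
        raise ValueError("baseline rate must be positive")
    return (peak - rates_by_year[intro_year]) / peak * 100.0


# Placeholder values for illustration only; NOT historical data.
placeholder_rates = {1900: 12.0, 1920: 8.0, 1940: 1.5, 1960: 0.3}

decline = percent_decline(placeholder_rates, 1960)
print(f"{decline:.1f}% decline by 1960")
```

The choice of baseline matters: measuring from the peak year gives a larger percentage than measuring from the first year of the series whenever the peak comes later, so any quoted figure should state which baseline it uses.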

Furthermore, the data reveal an even more compelling point: two other diseases noted on the chart, scarlet fever and typhoid, declined to near-zero levels in the absence of any vaccine at all.

The advent of antibiotics, such as the mass production of penicillin in 1944 and streptomycin in 1947, occurred well after the overwhelming majority of the decline in mortality had already taken place. This timeline powerfully reinforces the conclusion that factors other than modern medicine were the primary drivers of this trend. This view is strongly supported by a seminal study published in Pediatrics in December 2000, which concluded:

“…nearly 90% of the decline in infectious disease mortality among US children occurred [from 1900] before 1940, when few antibiotics or vaccines were available.”

England and Wales Mortality Data (1838–1978): An Even More Dramatic Decline

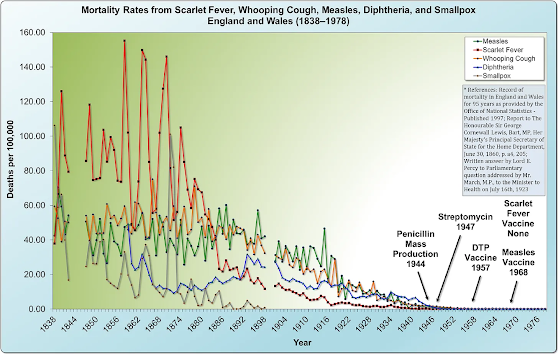

The evidence from the United States is compelling, but the story becomes even more definitive when we look across the Atlantic. England and Wales began gathering mortality data in 1838, 62 years before the start of the United States series.

By synthesizing data from the Office for National Statistics and other historical sources, we can construct a comprehensive mortality chart that tracks death rates from five major infectious diseases (scarlet fever, whooping cough, measles, diphtheria, and smallpox) over a sweeping 140-year period, from 1838 to 1978.

The trend revealed by this data is even more extraordinary than that of the United States. Mortality from these five diseases was massive throughout the mid-1800s and began a sustained and dramatic decline around 1875. The scale of this pre-vaccine improvement is staggering: the death rate from whooping cough had fallen by over 99% before the vaccine’s national introduction in 1957. Even more mind-boggling, the mortality rate from measles had declined by over 99.9%, or very nearly 100%, prior to the national rollout of its vaccine in 1968.

Perhaps most strikingly, scarlet fever—a far deadlier killer than either measles or whooping cough in the 19th century—declined to near-zero levels without any vaccine ever being developed. Once again, the advent of antibiotics occurred only after the vast majority (roughly 99%) of the mortality reduction had already been achieved.

The Overlooked Revolution: Sanitation, Nutrition, and the Fall of Mortality

The trajectory is key: The direction and magnitude of the trend are unmistakable. The death rate was falling precipitously for all infectious diseases due to profound public health and societal advancements, including better nutrition, sanitation, electrification (which improved food safety through refrigeration and reduced indoor air pollution from oil lamps), and improved transportation, which began to replace urban horse populations and their associated waste. Furthermore, the abandonment of harmful medical practices like bloodletting and toxic medications containing mercury or arsenic reduced iatrogenic harm, while social reforms like the implementation of labor and child labor laws, the closing of dreadful basement slums in favor of better housing, and a general rise in the standard of living all contributed to stronger public health. This collection of factors established a powerful, pre-existing downward trend in mortality.

As W. J. McCormick, M.D., astutely observed in a 1951 issue of the Archives of Pediatrics, the most significant factor in the historical decline of infectious diseases was not medical intervention but what he termed an “unrecognized prophylactic factor.” McCormick’s analysis argues that while medical advances are celebrated, they often arrive after the most dramatic reductions in incidence and mortality have already occurred. He posits that a broader, underlying force was primarily responsible for the improvement in public health.

This perspective challenges the conventional narrative that attributes the decline solely to vaccines, antibiotics, and specific public health measures. Instead, it forces us to consider the profound role of socioeconomic and environmental improvements—such as better nutrition, less crowded housing, improved sanitation, cleaner water, and higher standards of living—which collectively strengthened human immunity.

“The usual explanation offered for this changed trend in infectious diseases has been the forward march of medicine in prophylaxis and therapy but, from a study of the literature, it is evident that these changes in incidence and mortality have been neither synchronous with nor proportionate to such measures. The decline in tuberculosis, for instance, began long before any special control measures, such as mass x-ray and sanitarium treatment, were instituted, even long before the infectious nature of the disease was discovered. The decline in pneumonia also began long before the use of the antibiotic drugs. Likewise, the decline in diphtheria, whooping cough and typhoid fever began fully years prior to the inception of artificial immunization and followed an almost even grade before and after the adoption of these control measures. In the case of scarlet fever, mumps, measles and rheumatic fever there has been no specific innovation in control measures, yet these also have followed the same general pattern in incidence decline. Furthermore, puerperal and infant mortality (under one year) has also shown a steady decline in keeping with that of the infectious diseases, thus obviously indicating the influence of some over-all unrecognized prophylactic factor.”

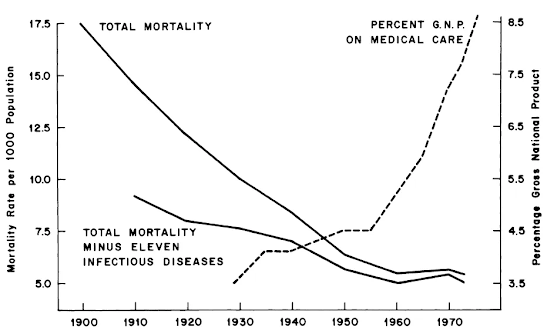

The 1977 study by epidemiologists John B. McKinlay and Sonja M. McKinlay presented a groundbreaking and contrarian analysis of 20th-century U.S. mortality data. Their rigorous investigation led them to a conclusion that contradicted the mainstream view: modern medical interventions, including vaccines and antibiotics, played a surprisingly minor role in the historic reduction of death rates since 1900.

Their work demonstrated that for the majority of infectious diseases, the most significant decline in fatalities had transpired prior to the development of specific medical treatments. The research suggests that medical tools were implemented after broader societal advancements had already accomplished the heavy lifting.

“In general, medical measures (both chemotherapeutic and prophylactic) appear to have contributed little to the overall decline in mortality in the United States since about 1900—having in many instances been introduced several decades after a marked decline had already set in and having no detectable influence in most instances. More specifically, with reference to those five conditions (influenza, pneumonia, diphtheria, whooping cough, and poliomyelitis) for which the decline in mortality appears substantial after the point of intervention—and on the unlikely assumption that all of this decline is attributable to the intervention… it is estimated that at most 3.5 percent of the total decline in mortality since 1900 could be ascribed to medical measures introduced for the diseases considered here.”

This minimal figure of 3.5% stands as a powerful challenge to the conventional account of medical history. The McKinlays’ analysis suggests a need to reorient our understanding of health progress, emphasizing the paramount importance of foundational public health and socioeconomic conditions over purely clinical, after-the-fact interventions. Their research remains a critical, evidence-based counterpoint in discussions of health policy and investment.
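The upper-bound reasoning in the quoted passage can be illustrated with a few lines of arithmetic: credit every bit of each condition's post-intervention fall to the intervention, add those falls together, and divide by the total fall in the overall death rate. The sketch below is only one reading of that logic, not the McKinlays' actual procedure. The function `upper_bound_share`, the condition labels, and all numbers are hypothetical placeholders, not values from their paper.

```python
def upper_bound_share(total_rate_start, total_rate_end, post_intervention_falls):
    """Upper bound on the percent of the total mortality decline credited to interventions.

    total_rate_start / total_rate_end: overall death rate at the start and end of the period.
    post_intervention_falls: maps a condition to the fall in its death rate after its
    intervention was introduced, in the same units as the overall rate.
    Assumption: the whole post-intervention fall is credited to the intervention.
    """
    total_fall = total_rate_start - total_rate_end
    if total_fall <= 0:
        raise ValueError("overall rate must have declined over the period")
    credited = sum(post_intervention_falls.values())
    return credited / total_fall * 100.0


# Placeholder values for illustration only (deaths per 1,000); NOT data from the 1977 study.
placeholder_falls = {"condition_a": 0.10, "condition_b": 0.05}

share = upper_bound_share(17.0, 9.0, placeholder_falls)
print(f"at most {share:.3f}% of the total decline")
```

Because the calculation credits the entire post-intervention fall to the intervention, the result is a ceiling rather than an estimate of the true contribution.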

Severe Disease Becomes Mild: A Forgotten Historical Fact

The historical data reveal a dual phenomenon: as mortality rates from infectious diseases plummeted, so too did the perceived severity of these diseases. Decades before widespread vaccination, the terrifying specters of whooping cough and measles were transforming into milder childhood ailments, a trend observed and documented by physicians in the field. This gradual attenuation of disease severity further challenges the narrative that medical intervention was the primary driver of improved health outcomes.

By the 1950s, the experience of measles had shifted so dramatically that a British GP could confidently state its harmlessness was a matter of common understanding. The disease was seen not as a threat, but as an inevitable and manageable part of childhood:

“In the majority of children the whole episode has been well and truly over in a week… In this practice measles is considered as a relatively mild and inevitable childhood ailment that is best encountered any time from 3 to 7 years of age. Over the past 10 years there have been few serious complications at any age, and all children have made complete recoveries. As a result of this reasoning no special attempts have been made at prevention even in young infants in whom the disease has not been found to be especially serious.”

Similarly, whooping cough (pertussis) was increasingly characterized by its mild presentation. Research in general practice populations confirmed that the classic, severe portrayal was no longer the norm, a fact that changed how doctors approached diagnosis and how parents were counseled:

“Most cases of whooping cough are relatively mild. Such cases are difficult to diagnose without a high index of suspicion because doctors are unlikely to hear the characteristic cough, which may be the only symptom. Parents can be reassured that a serious outcome is unlikely. Adults also get whooping cough, especially from their children, and get the same symptoms as children.”

This well-documented change in the natural history of these diseases led some in the medical community to openly question the necessity of universal vaccination policies. The cost-benefit calculus, for some, no longer seemed to favor mass intervention:

“…it may be questioned whether universal vaccination against pertussis is always justified, especially in view of the increasingly mild nature of the disease and of the very small mortality. I am doubtful of its merits at least in Sweden, and I imagine that the same question may arise in some other countries. We should also remember that the modern infant must receive a large number of injections and that a reduction in their number would be a manifest advantage.”

The epidemiological trends bore out these clinical observations. The decline in whooping cough incidence and mortality was a firmly established trajectory years before mass vaccination campaigns began, suggesting the vaccine was introduced into a landscape where the disease was already receding:

“There was a continuous decline, equal in each sex, from 1937 onward. Vaccination [for whooping cough], beginning on a small scale in some places around 1948 and on a national scale in 1957, did not affect the rate of decline if it be assumed that one attack usually confers immunity, as in most major communicable diseases of childhood… With this pattern well established before 1957, there is no evidence that vaccination played a major role in the decline in incidence and mortality in the trend of events.”

Together, these accounts paint a compelling picture: the conquering of childhood diseases was a complex process involving a profound reduction in both lethality and severity, driven by deep-seated societal changes that began long before the advent of vaccines.

Copyright © Madge Waggy